The 2018 cohort of Masters in Research students took their end-of-module multiple choice exam today. While the class stress about their answers to the questions, it’s a chance for me to learn whether the changes I’ve made to my teaching approaches are working or not. I love data (no surprise) and so building up a longitudinal data set is always appreciated. The year-long wait for the next sample, the restricted sample size and the variability in the samples are all frustrating and hard to control for, but I still like adding to my graphs around this time each year.

Anyway, this year I made a bunch of changes, including moving some additional teaching resources from hard copy to this website and extending them. One thing the students have always appreciated is having practice questions, and so I created a series of online multiple choice quizzes (MCQs, available here). I designed these quizzes thinking that they could reinforce some key teaching points (which is why there are some really simple questions) as well as giving the students something to prepare with.

Panic Mode?

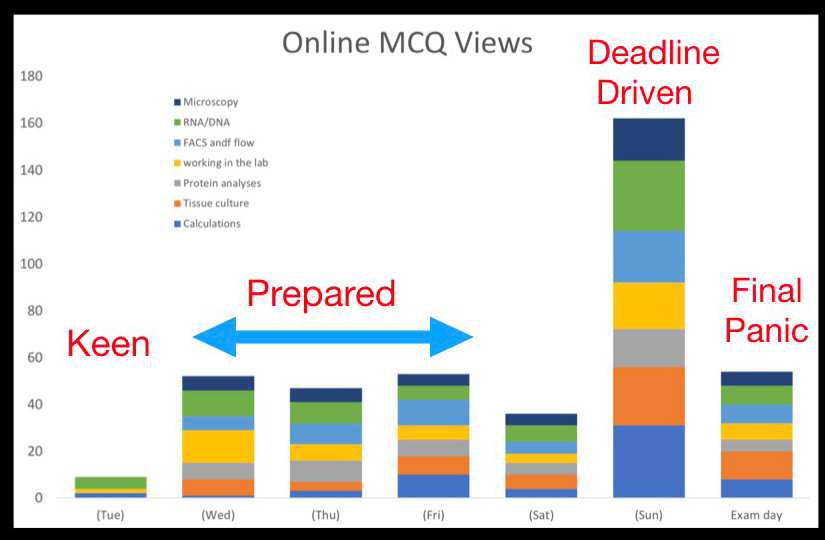

A bonus to putting these new quizzes online is that I get to see the statistics of when they were accessed. And so, on to the point of the post… when do you revise?

If you were wondering, there were 21 students in the class and the average number of views per MCQ in the last week was 59 (SD 10), i.e. roughly 3 views per person per quiz. They’re a keen bunch! Over-interpreting the data just a little bit suggests that the area they were most concerned about is RNA/DNA techniques (76 views) and the one they were least concerned about is microscopy (46). In fairness, the students have lots of resources other than these MCQs, including all the lecture notes, so I imagine that the rapid increase on Sunday night was at least some people testing their knowledge rather than looking over material for the first time!
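For anyone who wants to check the "roughly 3 views" figure, here is a quick sketch of the arithmetic. It assumes every view came from one of the 21 students, which the site statistics can't actually confirm, and the variable names are just illustrative.

```python
# Figures quoted in the post.
students = 21
mean_views_per_quiz = 59  # average views per MCQ in the last week

# Assumption: all views came from the class, spread evenly across students.
views_per_student_per_quiz = mean_views_per_quiz / students

print(f"{views_per_student_per_quiz:.1f} views per student per quiz")
```

That works out at about 2.8, hence "roughly 3".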

Although, unsurprisingly, there were a load of views yesterday, there was also a solid group of views on each of the days at the end of last week. I find it quite encouraging that at least half the class weren’t just doing panicked, last-minute studying but rather were laying down a solid base. The 50 views this morning are hopefully the same people in last-minute panic mode. Do you agree with my analysis?

How did they do?

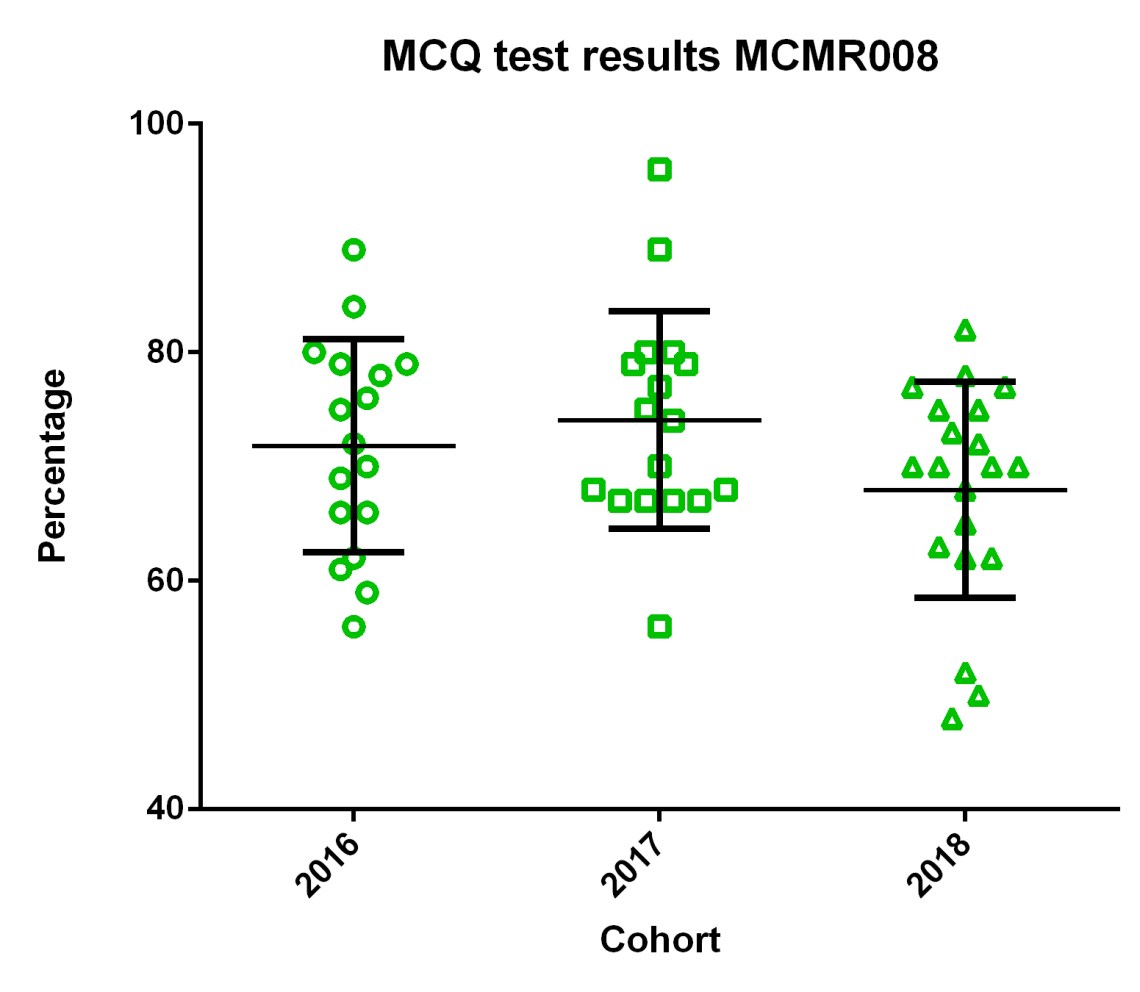

Did the revision help? Did they improve on last year’s cohort? Well, when you consider just the overall grades the answer is…not really! Boo! More specifically, the difference from this year to previous years is too small, and the variability is too large for me to be convinced that the difference hasn’t just occurred by chance.
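One way to put a number on "could this just be chance?" is a permutation test: shuffle the marks between the two years many times and see how often a difference at least as big as the real one turns up. The sketch below is only an illustration of that idea, not necessarily the analysis behind the graph. The function name is hypothetical and the marks are made-up placeholders, not the real exam scores.

```python
import random
from statistics import mean


def permutation_p_value(group_a, group_b, n_shuffles=10_000, seed=0):
    """Two-sided permutation test on the difference in mean marks."""
    rng = random.Random(seed)
    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    hits = 0
    for _ in range(n_shuffles):
        # Reassign marks to the two "years" at random.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:n_a]) - mean(pooled[n_a:]))
        if diff >= observed:
            hits += 1
    # Add-one correction so the estimate is never exactly zero.
    return (hits + 1) / (n_shuffles + 1)


# Placeholder marks, invented purely for illustration (NOT the real data).
this_year = [62, 71, 55, 68, 74, 59, 66]
last_year = [60, 69, 58, 64, 72, 57, 65]

p = permutation_p_value(this_year, last_year)
print(f"p = {p:.3f}")
```

A large p-value means shuffled data produce a gap this size all the time, which is the "too small a difference, too much variability" situation described above.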

However, as the exams are also a way to reinforce teaching points, I adjust the content each year. I continue to see these students throughout the year as they give project talks and posters on their research work, and from these I get a feel for which parts of my module aren’t being absorbed as well as I would like. These are the teaching points I select to reinforce with the exam and an associated post-exam feedback session where I discuss problem areas.

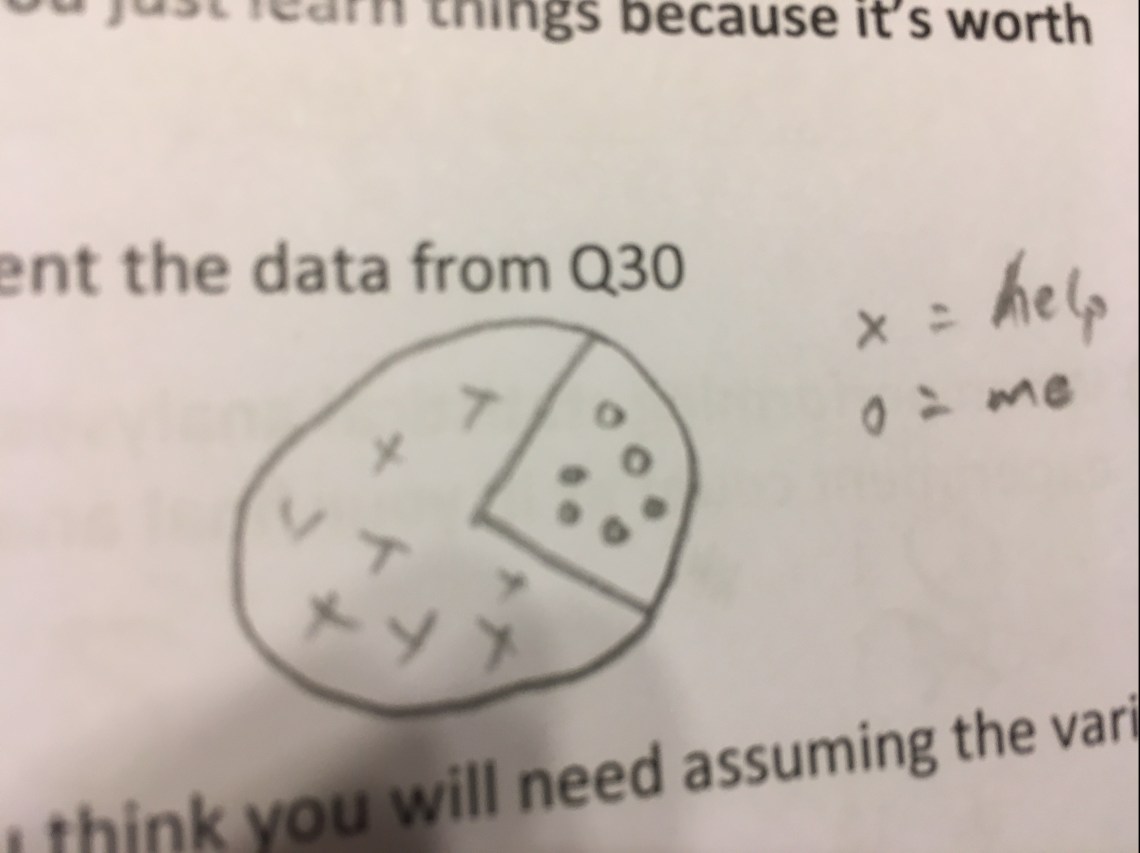

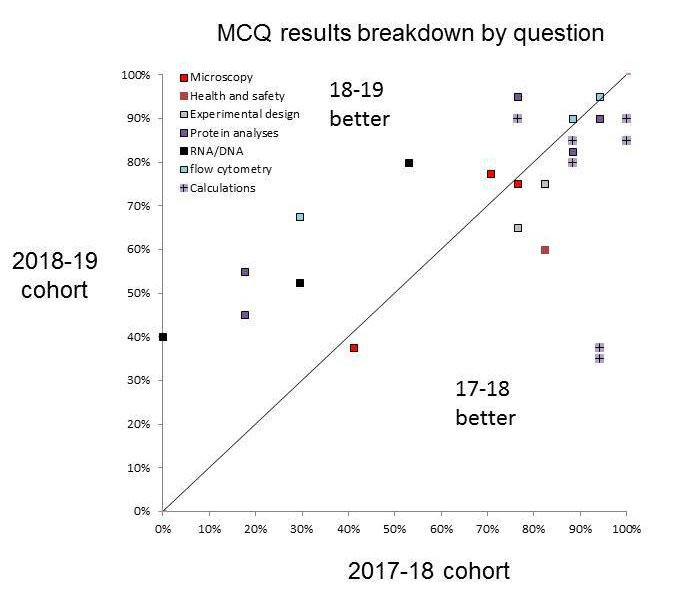

Therefore, looking at just the total mark doesn’t really tell me as much about the class as comparing on an individual question basis. When I do that and directly compare only the questions that fundamentally test the same core concept, the results are more informative and, to an extent, more promising.

From these data I can see a few areas with improvements (above the line), including questions relating to RNA/DNA or protein-based techniques. But there was also a drop-off in the more complicated calculation-type questions. I will use these data to adjust the way we approach teaching next year. I’ll keep the changes I made to the bits that worked and tweak those that performed less well.

And finally, there is potential to make mistakes during marking of exam scripts. Luckily I have a helper to second mark everything for me.
